…also known as Day 1 of the BBSRC Synthetic Biology Standards Workshop at Newcastle University, and musings arising from the day’s experiences.

In my relatively short career (approximately 12 years – wait, how long?) in bioinformatics, I have been involved to a greater or lesser degree in a number of standards efforts. It started in 1999 at the EBI, where I worked on the production of the protein sequence database UniProt. Now, I’m working with systems biology data and beginning to look into synthetic biology. I’ve been involved in the development (or maintenance) of a standard syntax for protein sequence data; standardized biological investigation semantics and syntax; standardized content for genomics and metagenomics information; and standardized systems biology modelling and simulation semantics.

(Bear with me – the reason for this wander down memory lane becomes apparent soon.)

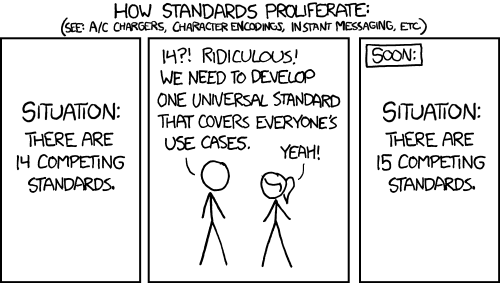

How many standards have you worked on? How can there be multiple standards, and why do we insist on creating new ones? Doesn’t the definition of a standard mean that we would only need one? Not exactly. Take the field of systems biology as an example. Some people are interested in describing a mathematical model, but have no need for storing either the details of how to simulate that model or the results of multiple simulation runs. These are logically separate activities, yet they fall within a single community (systems biology) and are broadly connected. A model is used in a simulation, which then produces results. So, when building a standard, you end up with the same separation: have one standard for the modelling, another for describing a simulation, and a third for structuring the results of a simulation. All that information does not need to be stored in a single location all the time. The separation becomes even more clear when you move across fields.
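To make that three-way split a bit more concrete, here is a minimal sketch in Python. It is not any real standard's data model: the class names, fields and the toy decay model are all my own assumptions, chosen only to show that each record can live on its own and point at the others by identifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelDescription:
    """What the model is (hypothetical structure)."""
    model_id: str
    equations: tuple


@dataclass(frozen=True)
class SimulationSetup:
    """How to simulate a model; refers to the model by id rather than copying it."""
    setup_id: str
    model_id: str
    end_time: float
    steps: int


@dataclass(frozen=True)
class SimulationResult:
    """What one simulation produced; refers to the setup by id."""
    setup_id: str
    values: tuple


# Illustrative values only: a toy decay model, one run, a few made-up numbers.
model = ModelDescription("decay", ("dx/dt = -k * x",))
setup = SimulationSetup("run1", model.model_id, end_time=10.0, steps=100)
result = SimulationResult(setup.setup_id, (1.0, 0.9, 0.81))
```

Someone who only cares about the maths needs just the first record; the other two can be stored, versioned and exchanged separately.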

But this isn’t completely clear cut. Some types of information overlap within standards of a single domain and even among domains, and this is where it gets interesting. Not only do you need a single community talking to each other about standard ways of doing things, but you also need cross-community participation. Such efforts result in even more high-level standards which many different communities can utilize. This is where work such as OBI and FuGE sit: with such standards, you can describe virtually any experiment. The interconnectedness of standards is a whole job (or jobs) in itself – just look at the BioSharing and MIBBI projects. And sometimes standards that seem (at least mostly) orthogonal do share a common ground. Just today, Oliver Ruebenacker posted some thoughts on the biopax-discuss mailing list where he suggests that at least some of BioPAX and SBML share a common ground and might be usefully “COMBINE”d more formally (yes, I’d like to go to COMBINE; no, I don’t think I’ll be able to this year!). (Scroll down that thread for a response by Nicolas Le Novère as to why that isn’t necessarily correct.) So, orthogonality, or the extent to which two or more standards overlap, is sometimes a hard thing to determine.

So, what have I learnt? As always, we must be practical. We should try to develop an elegant solution, but it really, really should be one which is easy to use and intuitive to understand. It’s hard to get to that point, especially as I think that point is (and should be) a moving target. From my perspective, group standards begin with islands of initial research in a field, which then gradually develop into a nascent community. As a field evolves, ‘just-enough’ strategies for storing and structuring data become ‘nowhere-near-enough’. Communication with your peers becomes more and more important, and it becomes imperative that standards are developed.

This may sound obvious, but the practicalities of creating a community standard mean such work requires a large amount of effort and continued goodwill. Even with the best of intentions, with every participant working towards the same goal, it can take months – or years – of meetings, document revisions and conference calls to hash out a working standard. This isn’t necessarily a bad thing, though. All voices do need to be heard, and you cannot have a viable standard without input from the community you are creating that standard for. You can have the best structure or semantics in the world, but if it’s been developed without the input of others, you’ll find people strangely reluctant to use it.

Every time I take part in a new standard, I see others like me who have themselves been involved in the creation of standards. It’s refreshing and encouraging. Hopefully the time it takes to create standards will drop as the science community as a whole gets more used to the idea. When I started, the only real standards in biological data (at least that I had heard of) were the structures defined by SWISS-PROT and EMBL/GenBank/DDBJ. By the time I left the EBI in 2006, I could have given you a list a foot long (GO, PSI, and many others), and that list continues to grow. Community engagement and cross-community discussions continue to be popular.

In this context, I can now add synthetic biology standards to my list of standards I’ve been involved in. And, as much as I’ve seen new communities and new standards, I’ve also seen a large overlap in the standardization efforts and an even greater willingness for lots of different researchers to work together, even taking into account the sometimes violent disagreements I’ve witnessed! The more things change, the more they stay the same…

At this stage, it is just a limited involvement, but the BBSRC Synthetic Biology Standards Workshop I’m involved in today and tomorrow is a good place to start with synthetic biology. I describe most of today’s talks in this post, and will continue with another blog post tomorrow. Enjoy!

For those with less time, here is the single sentence from each talk that most resonated with me:

- Mike Cooling: Emphasise the ‘re’ in reusable, and make it easier to build and understand large models from reusable components.

- Neil Wipat: For a standard to be useful, it must be computationally amenable as well as useful for humans.

- Herbert Sauro: Currently there is no formal ontology for synthetic biology, but one will need to be developed.

This meeting is organized by Jen Hallinan and Neil Wipat of Newcastle University. Its purpose is to set up key relationships in the synthetic biology community to aid the development of a standard for that community. Today, I listened to talks by Mike Cooling, Neil Wipat, and Herbert Sauro. I was – unfortunately – unable to be present for the last couple of talks, but will be around again for the second – and final – day of the workshop tomorrow.

Mike Cooling – Bioengineering Institute Auckland, New Zealand

Mike uses CellML (it’s made where he works, but that’s not the only reason…) in his work with systems and synthetic biology models. Among other things, it wraps MathML and partitions the maths, variables and units into reusable pieces. Although many of the parts seem domain specific, CellML itself is actually not domain specific. Further, unlike other modelling languages such as SBML, components in CellML are reusable and can be imported into other models. (Yes, a new package called comp in SBML Level 3 is being created to allow the importing of models into other models, but it isn’t mature – yet.)

How are models stored? There is the CellML repository, but what is out there for synthetic biology? The Registry of Standard Biological Parts was available, but only described physical parts. Therefore they created a Registry of Standard Virtual Parts (SVPs) to complement the original registry. This was developed as a group effort with a number of people including Neil Wipat and Goksel Misirli at Newcastle University.

They start with template mathematical structures (which are little parts of CellML), and then use the import functionality available as part of CellML to combine the templates into larger physical things/processes (‘SVPs’) and ultimately to combine things into system models.
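The layering described here (templates, then SVPs, then system models) can be sketched in plain Python. To be clear, this is only an illustration of the compositional idea and not CellML's actual import mechanism; the class names `Template`, `VirtualPart` and `SystemModel`, and the example parts and equations, are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Template:
    """A small reusable mathematical structure (hypothetical stand-in)."""
    name: str
    equation: str  # kept as plain text here; CellML itself wraps MathML


@dataclass
class VirtualPart:
    """A larger thing or process assembled by importing templates."""
    name: str
    imports: list = field(default_factory=list)

    def equations(self):
        return [template.equation for template in self.imports]


@dataclass
class SystemModel:
    """A system model assembled from virtual parts."""
    name: str
    parts: list = field(default_factory=list)

    def equations(self):
        # Flatten the equations of every part, preserving part order.
        return [eq for part in self.parts for eq in part.equations()]


# Templates are defined once and reused by several parts.
mass_action = Template("mass_action", "v = k * A * B")
degradation = Template("degradation", "v = kd * X")

promoter = VirtualPart("promoter", [mass_action])
protein = VirtualPart("protein", [mass_action, degradation])

model = SystemModel("toy_circuit", [promoter, protein])
```

The point of the sketch is that `mass_action` exists exactly once and is shared by both parts, so a fix to the template would reach every model that imports it.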

They extended the CellML repository to hold the resulting larger multi-file models, which included adding a method of distributed version control and allowing the sharing of models between projects through embedded workspaces.

What can these pieces be used for? Some of this work included creating a CellML model of the biology represented in Levskaya et al. 2005 and depositing all of the pieces of the model in the CellML repository. Another example is a model he’s working on about shear stress and multi-scale modelling for aneurysms.

Modules are being used and are growing in number, which is great, but he wants to concentrate more at the moment on the ‘re’ of the reusable goal, and make it easier to build and understand large models from reusable components. Some of the integrated services he’d like to have: search and retrieval, (semi-automated) visualization, semantically-meaningful metadata and annotations, and semi-automated composition.

All of the work above converges on the importance of metadata. Not many people used version 1.0 of the CellML Metadata Framework. With version 2.0 they have developed a core specification which is very simple, and then provide many additional satellite specifications. For example, there is a biological information satellite, where you use the biomodels qualifiers as relationships between your data and MIRIAM URNs. The main challenge is to find a database that is at the right level of abstraction (e.g. canonical forms of your concept of interest).
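As a rough illustration of what such an annotation amounts to, the sketch below builds a (subject, qualifier, resource) triple in Python. It is not the CellML Metadata Framework's own serialization. I am assuming the BioModels.net biology-qualifier namespace and the `urn:miriam:<collection>:<identifier>` URN shape as I understand them; the helper `annotate`, the element id and the accession are placeholders made up for the example.

```python
from collections import namedtuple

# Assumed namespace for the BioModels.net biology qualifiers.
BQBIOL = "http://biomodels.net/biology-qualifiers/"

Annotation = namedtuple("Annotation", ["subject", "qualifier", "resource"])


def annotate(element_id, qualifier, collection, identifier):
    """Link a model element to an external record via a MIRIAM-style URN."""
    urn = f"urn:miriam:{collection}:{identifier}"
    return Annotation(element_id, BQBIOL + qualifier, urn)


# Hypothetical element id and placeholder accession, for illustration only.
ann = annotate("protein_X", "is", "uniprot", "P00000")
```

The challenge mentioned above shows up in the last line: the triple is only as useful as the record it points to, so picking a database at the right level of abstraction matters more than the syntax.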

Neil Wipat – Newcastle University

Please note Neil Wipat is my PhD supervisor.

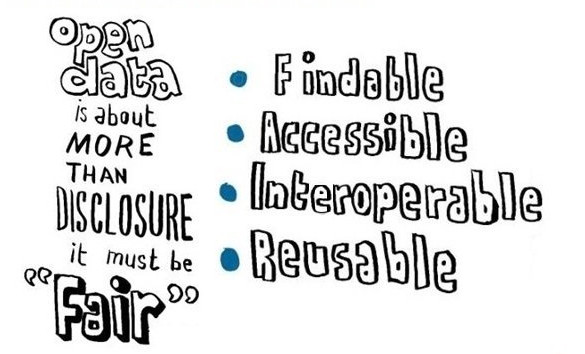

Neil spoke about data standards, tool interoperability, data integration and synthetic biology, a.k.a. “Why we need standards”. They would like to promote interoperability and data exchange between their own tools (important!) as well as other tools. They’d also like to facilitate data integration to inform the design of biological systems both from a manual designer’s perspective and from the POV of what is necessary for computational tool use. They’d also like to enable the iterative exchange of data and experimental protocols in the synthetic biology life cycle.

A description of some of the tools developed in Neil’s group (and elsewhere) exemplifies the differences in data structures present within synthetic biology. BacilloBricks was created to help get, filter and understand the information from the MIT registry of standard parts. They also created the Repository of Standard Virtual Biological Parts. This SVP repository was then extended with parts from Bacillus and was extended to make use of SBML as well as CellML. This project is called BacilloBricks Virtual. All of these tools use different formats.

It’s great having a database of SVPs, but you need a way of accessing and utilizing the database. Hallinan and Wipat have started a collaboration with the people at Microsoft Research who created GEC (Genetic Engineering of Cells), a programming language and simulator for the genetic engineering of living cells. A summer student created a GEC compiler for SVPs from BacilloBricks Virtual. Goksel has also created the MoSeC system, where you can automatically go from a model to a graph to an EMBL file.

They also have BacillusRegNet, which is an information repository about transcription factors for Bacillus spp. It is also a source of orthogonal transcription factors for use in B. subtilis and Geobacillus. Again, it is very important to allow these tools to communicate efficiently.

The data warehouse they’re using is ONDEX. They feed information from the ONDEX data store to the biological parts database. ONDEX was created for systems biology to combine large experimental datasets. ONDEX views everything as a network, and is therefore a graph-based data warehouse. ONDEX has a “mini-ontology” to describe the nodes and edges within it, which makes querying the data (and understanding how the data is structured) much easier. However, it doesn’t include any information about the synthetic biology side of things. Ultimately, they’d like an integrated knowledgebase using ONDEX to provide information about biological virtual parts. Therefore they need a rich data model for synthetic biology data integration (perhaps including an RDF triplestore).
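To show why a “mini-ontology” over nodes and edges makes querying easier, here is a small typed-graph sketch in Python. It is emphatically not the ONDEX API or its data model: the `TypedGraph` class, the node and edge type names, and the example identifiers are all assumptions of mine.

```python
class TypedGraph:
    """A tiny graph whose nodes and edges must use declared types."""

    def __init__(self, node_types, edge_types):
        self.node_types = set(node_types)
        self.edge_types = set(edge_types)
        self.nodes = {}  # node id -> node type
        self.edges = []  # (source id, edge type, target id)

    def add_node(self, node_id, node_type):
        if node_type not in self.node_types:
            raise ValueError(f"unknown node type: {node_type}")
        self.nodes[node_id] = node_type

    def add_edge(self, source, edge_type, target):
        if edge_type not in self.edge_types:
            raise ValueError(f"unknown edge type: {edge_type}")
        if source not in self.nodes or target not in self.nodes:
            raise KeyError("both endpoints must be added as nodes first")
        self.edges.append((source, edge_type, target))

    def neighbours(self, source, edge_type):
        """Return targets reachable from source over edges of one type."""
        return [t for s, e, t in self.edges if s == source and e == edge_type]


# Hypothetical vocabulary and identifiers, for illustration only.
graph = TypedGraph(
    node_types={"Gene", "Protein", "TranscriptionFactor"},
    edge_types={"encodes", "regulates"},
)
graph.add_node("geneA", "Gene")
graph.add_node("proteinA", "Protein")
graph.add_node("tfA", "TranscriptionFactor")
graph.add_edge("geneA", "encodes", "proteinA")
graph.add_edge("tfA", "regulates", "geneA")
```

Because every node and edge carries a declared type, a query such as `graph.neighbours("tfA", "regulates")` is unambiguous. The gap noted above corresponds to the vocabulary itself: nothing in it yet describes synthetic biology concepts such as parts.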

Interoperability, Design and Automation: why we need standards.

- Requirement 1. There needs to be interoperability and data exchange among these tools, as well as between these tools and other external tools.

- Requirement 2. Standards for data integration aid the design of synthetic systems. The format must be both computationally amenable and useful for humans.

- Requirement 3. Automation of the design and characterization of synthetic systems also requires standards.

The requirements of synthetic biology research labs such as Neil Wipat’s make it clear that standards are needed.

KEYNOTE: Herbert Sauro – University of Washington, US

Herbert Sauro described the developing community within synthetic biology, the work on standards that has already begun, and the Synthetic Biology Open Language (SBOL).

He asks us to remember that Synthetic Biology is not biology – it’s engineering! Beware of sending synthetic biology grant proposals to a biology panel! It is a workflow of design-build-test. He’s mainly interested in the bit between building and testing, where verification and debugging happen.

What’s so important about standards? They are critical in engineering, where they increase productivity and lower costs. In order to identify the requirement you must describe a need. There is one immediate need: store everything you need to reconstruct an experiment within a paper (for more on this see the Nature Biotech paper by Peccoud et al. 2011: Essential information for synthetic DNA sequences). Currently, it’s almost impossible to reconstruct a synthetic biology experiment from a paper.

There are many areas requiring standards to support the synthetic biology workflow: assembly, design, distributed repositories, laboratory parts management, and simulation/analysis. From a practical POV, the standards effort needs to allow researchers to electronically exchange designs with round tripping, and much more.

The standardization effort for synthetic biology began with a grant from Microsoft in 2008 and the first meeting was in Seattle. The first draft proposal was called PoBoL but was renamed to SBOL. It is a largely unfunded project. In this way, it is very similar to other standardization projects such as OBI.

DARPA mandated 2 weeks ago that all projects funded from Living Foundries must use SBOL.

SBOL is involved in the specification, design and build part of the synthetic biology life cycle (but not in the analysis stage). There are a lot of tools and information resources in the community where communication is desperately needed.

SBOL comes in three parts: SBOL Semantic, SBOL Visual, and SBOL Script. SBOL Semantic is the one that’s going to be doing all of the exchange between people and tools. SBOL Visual is a controlled vocabulary and symbols for sequence features.

Have you been able to learn anything from SBML/SBGN, as you have a foot in both worlds? SBGN doesn’t address any of the genetic side, and is pretty complicated. You ideally want a very minimalistic design. SBOL Semantic is written in UML and is relatively small, though it has taken three years to get to this point. But you need host context above and beyond what’s modelled in SBOL Semantic. Without it, you cannot recreate the experiment.

Feature types such as operator sites, promoter sites, terminators, restriction sites etc can go into the sequence ontology (SO). The SO people are quite happy to add these things into their ontology.

SBOLr is a web front end for a knowledgebase of standard biological parts that they used for testing (not publicly accessible yet). TinkerCell is a drag and drop CAD tool for design and simulation. There is a lot of semantic information underneath to determine what is/isn’t possible, though there is no formal ontology. However, you can semantically annotate all parts within TinkerCell, allowing the plugins to interpret a given design. A TinkerCell model can be composed of sub-models, which makes it easy to swap in new bits of models to see what happens.

WikiDust is a TinkerCell plugin written in Python which searches SBPkb for design components, and ultimately uploads them to a wiki. LibSBOLj is a library for developers to help them connect software to SBOL.

The physical and host context must be modelled to make all of this useful. By using semantic web standards, SBOL becomes extensible.

Currently there is no formal ontology for synthetic biology but one will need to be developed.

Please note that the notes/talks section of this post is merely my notes on the presentation. I may have made mistakes: these notes are not guaranteed to be correct. Unless explicitly stated, they represent neither my opinions nor the opinions of my employers. Any errors you can assume to be mine and not the speaker’s. I’m happy to correct any errors you may spot – just let me know!
